Originally published as a LinkedIn article by Ricky Solanki

The problem wasn't AI failure. It was AI success at a scale nobody had modelled for. Most marketing teams treat AI as a fixed cost. A subscription. A monthly line item.

That's a fundamental misunderstanding of how AI economics actually work. AI spend isn't flat. It's variable, granular, and directly tied to how you structure and deploy workflows.

The unit of cost is the token, and the difference between an optimised AI operation and a wasteful one can be 10 to 20 times the budget for identical outputs.

At Push, we call this AI tokenomics, borrowing the term from cryptocurrency but applying it to the economics of token usage in AI systems. It's the commercial discipline of understanding, tracking, and optimising token-level costs across AI marketing workflows.

If you're scaling AI beyond pilot projects, tokenomics is the cost conversation you need to be having. Here's why it matters, where the waste hides, and how to fix it.

What is a token in AI?

DEFINITION: What is a token in AI?

A token is the unit AI models use to process text. It represents roughly four characters, or about three-quarters of a word. Both the input (your prompt) and the output (the model's response) are measured in tokens. Token costs vary by model tier and provider.

When you send a prompt to an AI model, it doesn't read words the way humans do. It processes tokens. A token is roughly four characters of text, or about three-quarters of a word. A short prompt might use 50 tokens. A complex brief with context and examples might use 50,000.
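As a rough illustration of that rule of thumb, here is a minimal sketch that estimates token counts from character length. The function name `estimate_tokens` is our own, and the four-characters-per-token figure is only an approximation; real tokenizers differ by provider and model, so treat the result as a planning estimate rather than a billing figure.

```python
import math

# Rule of thumb from the text: roughly four characters per token.
# This is an approximation, not a real tokenizer.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Return a rough token estimate for a piece of text."""
    if not text:
        return 0
    return math.ceil(len(text) / CHARS_PER_TOKEN)


short_prompt = "Write a product title for a stainless steel water bottle."
print(f"Estimated tokens: {estimate_tokens(short_prompt)}")
```

For accurate counts, most providers publish their own tokenizer or a token-counting endpoint, and those should replace the estimate once you move past back-of-envelope planning.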

Every token has a price. That price depends on:

- Which AI provider you're using (OpenAI, Anthropic, Google, etc.)

- Which model tier you've selected

- Whether the token is part of the input (your prompt) or output (the model's response)
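Those three factors can be sketched as a simple cost function. The `Rate` class, the `call_cost` function, and the specific rates below are hypothetical placeholders chosen for illustration; check your provider's current price list for real figures.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rate:
    """Price for one provider and model tier, in £ per million tokens."""
    input_per_million: float
    output_per_million: float


def call_cost(input_tokens: int, output_tokens: int, rate: Rate) -> float:
    """Cost in £ of a single model call, pricing input and output separately."""
    return (
        input_tokens * rate.input_per_million
        + output_tokens * rate.output_per_million
    ) / 1_000_000


# Hypothetical rate for an expensive model; not a quoted price.
example_rate = Rate(input_per_million=12.0, output_per_million=50.0)

# A complex brief (50,000 input tokens) that returns a 2,000-token response.
print(f"£{call_cost(50_000, 2_000, example_rate):.2f}")
```

The point of separating input and output is that output tokens are usually priced higher, so a long response can cost more than the prompt that produced it.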

For marketing teams running AI at scale, generating hundreds or thousands of assets per week, token costs add up fast. The teams who understand this operate at a fraction of the cost of those who don't.

As one reader put it: "No such thing as a free lunch!" when it comes to AI costs.

Why AI tokenomics matters

DEFINITION: What is AI tokenomics?

AI tokenomics (a phrase that I coined at Push) is the commercial discipline of understanding, tracking, and optimising token-level costs across AI marketing workflows. The term adapts "tokenomics" from cryptocurrency (where it describes token distribution models) and applies it to AI operations, emphasising that AI costs are variable and usage-based rather than fixed subscriptions.

At Push, we coined the term "AI tokenomics" to describe what we were seeing across client operations: massive, invisible cost variance driven by how teams structured their AI usage. The crypto world uses "tokenomics" to describe token distribution models. We're using it to describe something more immediately practical: the economics of AI token consumption at scale.

The major AI providers now offer models across three capability tiers, each priced very differently:

Frontier models (high complexity)

These handle complex reasoning, nuanced long-form writing, and tasks requiring genuine judgement. Examples: GPT-4, Claude Opus, Gemini Ultra.

Cost: £10-15 per million input tokens, £30-75 per million output tokens.

Mid-tier models (workhorses)

These handle the majority of marketing tasks well: drafting, summarising, reformatting, moderate reasoning.

Cost: £1-3 per million input tokens.

Light models (fast and cheap)

These suit classification, tagging, simple reformatting, and routing decisions.

Cost: Under £1 per million input tokens.

DEFINITION: Model tiers in AI

AI providers offer three model tiers with different capabilities and costs:

- Frontier models: Complex reasoning and nuanced writing (£10-15 per million input tokens)

- Mid-tier models: General marketing tasks like drafting and summarising (£1-3 per million input tokens)

- Light models: Classification, tagging, and simple formatting (under £1 per million input tokens)

Here's where AI tokenomics becomes a commercial discipline.

Running 10,000 product descriptions through a frontier model versus a mid-tier model is the difference between a £500 task and a £30 task. In most cases, a human reader cannot distinguish the outputs.

That cost difference, more than 16x, is the gap most marketing teams are sitting in without realising it.
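To make the arithmetic concrete, here is one set of assumptions under which those figures come out. The tokens per description and the exact rates are our own illustrative choices (the frontier rates sit inside the ranges quoted above; the mid-tier output rate is assumed, since only its input range is given), so this is a sketch of how the gap arises, not a record of a real job.

```python
DESCRIPTIONS = 10_000
INPUT_TOKENS_EACH = 1_000   # assumed prompt size per description
OUTPUT_TOKENS_EACH = 800    # assumed response size per description

# (input rate, output rate) in £ per million tokens. Illustrative values only.
RATES = {
    "frontier": (12.0, 47.5),
    "mid_tier": (1.0, 2.5),   # output rate is an assumption
}


def batch_cost(tier: str) -> float:
    """Total cost in £ of running the whole batch on one tier."""
    input_rate, output_rate = RATES[tier]
    input_cost = DESCRIPTIONS * INPUT_TOKENS_EACH * input_rate / 1_000_000
    output_cost = DESCRIPTIONS * OUTPUT_TOKENS_EACH * output_rate / 1_000_000
    return input_cost + output_cost


frontier = batch_cost("frontier")
mid_tier = batch_cost("mid_tier")
print(f"Frontier: £{frontier:.2f}")
print(f"Mid-tier: £{mid_tier:.2f}")
print(f"Ratio: {frontier / mid_tier:.1f}x")
```

Under these assumptions the batch costs £500 on the frontier tier and £30 on the mid tier. Change the token counts or rates and the ratio moves, which is exactly why it is worth modelling before the work starts.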

The real-world cost of ignoring tokenomics: the Uber case

Uber's experience demonstrates what happens when token economics aren't understood at the planning stage.

The company rolled out Claude Code access to its engineering teams in December 2025. It wasn't a planned rollout. It was pull-driven adoption. Engineers discovered the tool worked and usage exploded.

The adoption curve

- December 2025: 32% of engineers using Claude Code

- February 2026: 63% usage

- April 2026: 95% of engineers using AI tools monthly

The productivity gains were real:

- 70% of committed code now originates from AI tools

- 11% of pull requests opened by AI agents

- 1,800 AI-generated code changes per week flowing into production

The cost impact:

By April 2026, the entire annual AI budget was exhausted. Just four months into the year. Uber has roughly 5,000 engineers and a £3.4 billion R&D budget.

If a company at that scale with dedicated budget planning teams can underestimate AI costs by enough to blow through a full year's allocation in four months, the problem is structural.

The issue wasn't that Uber used AI badly. It's that they modelled it as a per-seat subscription cost when the actual economics are usage-based and exponential when adoption succeeds.

Where AI marketing budgets leak

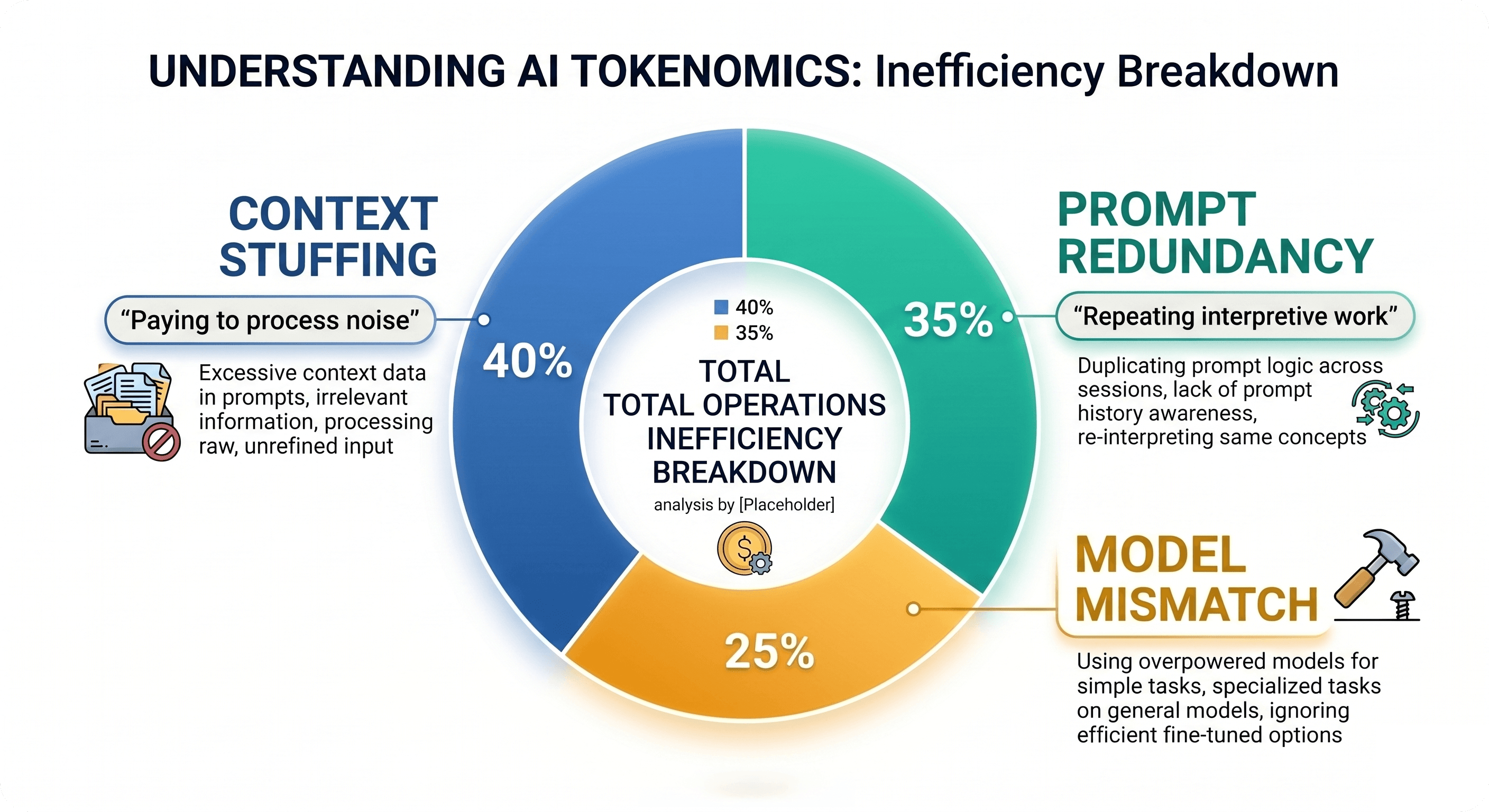

We've now audited token usage across dozens of marketing operations. The waste tends to cluster in three places.

1. Context stuffing

Teams paste enormous amounts of background information into every prompt because more context sounds like it should produce better outputs. Often it doesn't.

If most of what's in the context window isn't directly relevant to the task, you're paying to process noise. Every unnecessary sentence in a prompt costs tokens. Multiply that across thousands of tasks per month, and the waste compounds fast.
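A quick way to size this kind of waste is to price the irrelevant tokens directly. The function name and every number in the example are hypothetical; substitute figures from your own usage logs.

```python
def monthly_context_waste(
    irrelevant_tokens_per_prompt: int,
    prompts_per_month: int,
    input_rate_per_million: float,
) -> float:
    """Cost in £ per month of input tokens that do not help the task."""
    wasted_tokens = irrelevant_tokens_per_prompt * prompts_per_month
    return wasted_tokens * input_rate_per_million / 1_000_000


# Hypothetical operation: 20,000 tokens of unused background in each prompt,
# 5,000 prompts a month, on a model charging £12 per million input tokens.
waste = monthly_context_waste(20_000, 5_000, 12.0)
print(f"£{waste:,.2f} per month spent processing noise")
```

With these example inputs the waste is £1,200 a month, before counting any knock-on effect on output quality or retries.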

As Cim Dahir, AI Marketing Account Director at Push, observed:

"Funny how when you first start you're told giving AI as much context as possible is critical, once you get comfortable you suddenly have to start being so conscious of what context you're giving!"

That's the trap. Initial advice pushes maximum context. Mature usage requires surgical precision.

2. Prompt redundancy

Poorly engineered prompts make the model do interpretive work repeatedly that should have been structured once in a proper template.

Every poorly built prompt is a small tax. Across thousands of campaign assets, that tax becomes material.

3. Model mismatch

Not every task needs your most powerful model. But most teams default to their top-tier option for everything because it's easier than building a decision framework. The price difference between getting that decision right and getting it wrong is substantial at volume.

We saw this pattern with a client about eighteen months ago. They'd built out an ambitious AI content operation. Automated briefing, AI-assisted drafting, scaled asset production across multiple markets. It looked sophisticated on paper.

When we got under the bonnet, the token spend was all over the place. They were running every single task, from a one-line product title refresh to a complex audience segmentation brief, through their most expensive model.

Nobody had framed it as a cost question.

How we lived this at Push

At Push, we didn't just observe the AI tokenomics problem with clients. We experienced it firsthand. When we became an AI-first agency, we encouraged every team member to lean into the tools. No restrictions. No gatekeeping. We wanted genuine adoption, not performative pilots.

It worked. Adoption went through the roof. Then the credits started running out. Teams were hitting their monthly limits mid-month. People were asking colleagues if they could "borrow" credits to finish urgent client work. The demand signal was clear: AI had become essential infrastructure, not optional tooling.

Nemash Patel, Marketing Strategist at Push, described the moment:

"I genuinely felt like someone with an addiction asking colleagues 'can you spare some credits?' Mid-month. Multiple times. Not my proudest moment! That's when we knew we had a tokenomics problem, not a usage problem."

The solution wasn't to restrict usage. It was to treat AI spend like any other critical operational cost.

We renegotiated with our AI providers to secure larger credit allocations at better rates. We mapped workflows to model tiers so routine tasks ran on cheaper models and complex reasoning stayed on frontier ones. And we audited our agents and prompts to make them more token-efficient.

We used AI to optimise AI spend.

The result? Teams operate faster than before, but our cost-per-output dropped by roughly 40% compared to our initial unstructured usage period. Suddenly the same work that burned through credits in two weeks was lasting the full month. Turns out the solution to AI cost problems is… better AI usage.

We've since implemented the same framework with clients facing identical challenges. The pattern is consistent: encourage adoption, then optimise ruthlessly once you understand actual usage.

Most organisations do it backwards. They try to control costs before they understand value. That kills adoption. The teams who win let usage grow, measure it properly, then engineer efficiency once they know where the waste sits.

How to fix it: an AI tokenomics framework for marketing teams

The solution isn't complicated. It requires treating AI spend with the same commercial rigour you'd apply to any other media or production channel.

Treat prompts as infrastructure

A well-engineered prompt is a business asset. It should be:

- Documented

- Tested

- Improved over time

- Used consistently, not rewritten from scratch each time

This is core to how we approach AI implementation strategy with clients. Prompts should be version-controlled and treated like code, not disposable instructions.
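One possible shape for a prompt treated as infrastructure is a named, versioned template stored in your codebase. This is a minimal sketch using Python's standard `string.Template`; the `PromptTemplate` class, its fields, and the example prompt are hypothetical and not a description of any particular tool.

```python
from dataclasses import dataclass
from string import Template


@dataclass(frozen=True)
class PromptTemplate:
    """A documented, versioned prompt that is reused rather than rewritten."""
    name: str
    version: str
    description: str
    template: Template

    def render(self, **fields: str) -> str:
        # substitute() raises KeyError if a required field is missing,
        # so an incomplete brief fails loudly before any tokens are spent.
        return self.template.substitute(**fields)


PRODUCT_DESCRIPTION = PromptTemplate(
    name="product_description",
    version="1.0.0",
    description="Short product description for catalogue pages.",
    template=Template(
        "Write a ${word_count}-word product description for ${product}. "
        "Tone: ${tone}. Return plain text only."
    ),
)

print(PRODUCT_DESCRIPTION.render(
    word_count="50", product="steel water bottle", tone="friendly"
))
```

Keeping the template in version control means a change to the wording can be tested against the previous version instead of drifting silently from one campaign to the next.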

Match models to tasks deliberately

Most enterprise AI platforms now give you a range of model options. A mature operation should have a clear view of:

- Which tier is appropriate for which job

- When to audit that decision as model capabilities and pricing shift

Ask: does this task require reasoning, or just formatting? Does it need creativity, or consistency? The answer determines which model you should use.
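A decision framework can start as a simple lookup table. The sketch below maps task types to the three tiers described earlier; the task names, the `choose_tier` function, and the choice of mid-tier as the default are assumptions for illustration, and the mapping should be revisited as capabilities and pricing shift.

```python
from enum import Enum


class Tier(Enum):
    LIGHT = "light"
    MID = "mid"
    FRONTIER = "frontier"


# Hypothetical mapping based on the tier descriptions in this article.
TASK_TIERS = {
    "classification": Tier.LIGHT,
    "tagging": Tier.LIGHT,
    "simple_reformatting": Tier.LIGHT,
    "routing": Tier.LIGHT,
    "drafting": Tier.MID,
    "summarising": Tier.MID,
    "moderate_reasoning": Tier.MID,
    "complex_reasoning": Tier.FRONTIER,
    "long_form_writing": Tier.FRONTIER,
}


def choose_tier(task_type: str, default: Tier = Tier.MID) -> Tier:
    """Pick a model tier for a task; unknown tasks fall back to the default."""
    return TASK_TIERS.get(task_type, default)


for task in ("tagging", "drafting", "complex_reasoning", "something_new"):
    print(f"{task}: {choose_tier(task).value}")
```

Defaulting unknown tasks to the mid tier, rather than the most expensive one, reverses the habit described above: a task has to earn its way onto the frontier model.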

Build feedback loops

Which prompts produce clean outputs on the first pass? Which ones require three attempts before they're usable? Iterations cost tokens. Cutting them is both a quality improvement and a direct cost saving.

Track:

- First-pass success rate by prompt

- Average token usage per task type

- Cost per output by workflow

These metrics surface inefficiency fast.
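The three metrics above can be computed from a basic usage log. This sketch assumes each log entry records one finished output along with the attempts, tokens, and cost it took; the `Run` record, its fields, and the sample data are all hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Run:
    """One finished output and what it took to produce it."""
    prompt_name: str
    task_type: str
    workflow: str
    attempts: int   # model calls needed before the output was usable
    tokens: int     # total tokens across all attempts
    cost: float     # total cost in £ across all attempts


def first_pass_rate(runs: list[Run]) -> dict[str, float]:
    """Share of outputs per prompt that were usable on the first attempt."""
    totals, passes = defaultdict(int), defaultdict(int)
    for run in runs:
        totals[run.prompt_name] += 1
        if run.attempts == 1:
            passes[run.prompt_name] += 1
    return {name: passes[name] / totals[name] for name in totals}


def avg_tokens_per_task_type(runs: list[Run]) -> dict[str, float]:
    """Average total tokens consumed per output, grouped by task type."""
    tokens, counts = defaultdict(int), defaultdict(int)
    for run in runs:
        tokens[run.task_type] += run.tokens
        counts[run.task_type] += 1
    return {task: tokens[task] / counts[task] for task in counts}


def cost_per_output(runs: list[Run]) -> dict[str, float]:
    """Average cost in £ per finished output, grouped by workflow."""
    costs, counts = defaultdict(float), defaultdict(int)
    for run in runs:
        costs[run.workflow] += run.cost
        counts[run.workflow] += 1
    return {workflow: costs[workflow] / counts[workflow] for workflow in counts}


# Made-up sample log for illustration.
runs = [
    Run("product_description", "drafting", "catalogue", 1, 1200, 0.004),
    Run("product_description", "drafting", "catalogue", 3, 3900, 0.013),
    Run("ad_tagging", "tagging", "paid_social", 1, 300, 0.0002),
    Run("ad_tagging", "tagging", "paid_social", 1, 340, 0.0002),
]

print(first_pass_rate(runs))
print(avg_tokens_per_task_type(runs))
print(cost_per_output(runs))
```

In this sample, the prompt that needed three attempts more than triples its token use for a single output, which is the kind of pattern these metrics are meant to expose.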

For teams looking to build internal capability around these principles, our AI training programmes cover prompt engineering, workflow optimisation, and cost management frameworks.

The AI tokenomics adoption curve

Most teams see AI costs rise and panic. They restrict access, add approval gates, or pause rollout entirely. The cost curve flattens, but so does adoption. You've just killed momentum to save money you should have been spending.

The teams who understand AI tokenomics let the cost curve rise during genuine adoption. They measure properly. Then they intervene with structure: model tier mapping, prompt optimisation, workflow audits. That's when cost-per-output drops while productivity continues to climb.

At Push, the inflection point came when teams were borrowing credits mid-month. That wasn't a crisis. It was a signal. We were past the adoption phase and ready for the optimisation phase.

The difference between winning and losing isn't whether your AI costs rise initially. It's whether you know when and how to optimise once they do.

What AI tokenomics means for your marketing operation

KEY TAKEAWAYS: AI Tokenomics for Marketing Teams

- AI costs are variable, not flat. Token usage depends on prompt design, model selection, and task complexity.

- The cost gap between model tiers is 10-20x for equivalent outputs. Most teams waste budget using expensive models for simple tasks.

- Prompts are infrastructure. Treat them as reusable assets, not disposable instructions. Document, test, and version-control them.

- Track cost-per-output, not just total spend. Monitor first-pass success rates, average tokens per task, and cost per workflow.

- Encourage adoption first, optimise second. Controlling costs before understanding value kills genuine AI integration. Let usage grow, measure properly, then engineer efficiency.

- Real-world impact: Uber exhausted their 2026 AI budget in four months. Push reduced cost-per-output by 40% through structured optimisation.

FAQ: Common questions about AI tokenomics

What is a token in AI?

A token is the unit AI models use to process text. It's roughly four characters, or about three-quarters of a word. Both your prompt (input) and the model's response (output) are measured in tokens.

How much do tokens cost?

Token costs vary by provider and model tier. Frontier models cost £10-15 per million input tokens and £30-75 per million output tokens. Mid-tier models cost £1-3 per million input tokens. Light models cost under £1 per million input tokens.

How do I know which AI model to use?

Match the model to the task. Use frontier models for complex reasoning and nuanced writing. Use mid-tier models for drafting, summarising, and moderate reasoning. Use light models for classification, tagging, and simple reformatting.

Can I reduce AI costs without sacrificing quality?

Yes. Most teams overspend by using expensive models for tasks that don't require them. Auditing token usage and matching models to tasks typically cuts costs by 50-80% with no quality loss.

What is AI tokenomics?

AI tokenomics (a term coined by Ricky Solanki at Push, adapted from cryptocurrency terminology) is the commercial discipline of understanding, tracking, and optimising token-level costs across AI workflows. Unlike crypto tokenomics (which describes digital currency distribution models), AI tokenomics focuses on the unit economics of AI model usage. It's how you turn AI from an uncontrolled expense into a managed, efficient operation.

How did Uber burn through their AI budget so quickly?

Uber rolled out Claude Code to its roughly 5,000 engineers in December 2025. Usage roughly doubled by February, from 32% to 63% of engineers. They modelled AI as a per-seat cost when the actual economics are usage-based. By April 2026, the entire annual budget was exhausted.

What's the biggest mistake companies make with AI costs?

Trying to control costs before understanding value. This kills adoption. The pattern that works: encourage usage, measure properly, optimise once you see where the waste sits.

Who coined the term "AI tokenomics"?

Ricky Solanki, co-founder of Push, coined the term "AI tokenomics" to describe the discipline of managing token-level costs in AI operations.

While "tokenomics" originates from cryptocurrency (describing token distribution economics), Solanki repurposed it for AI systems to emphasise that AI spend behaves like variable, usage-based costs (similar to media buying) rather than fixed software subscriptions.

AI tokenomics: the next commercial discipline for marketing

AI tokenomics, the framework we've developed at Push for understanding and optimising token-level costs, is becoming a commercial discipline every marketing leader needs to understand.

The crypto world coined "tokenomics" for token distribution models. We're claiming it for AI operations because the parallel is apt: both are about understanding unit economics in systems where costs are variable, usage-based, and easy to misunderstand until you're deep in the red.

As CEO Iztok Smolic noted when reviewing this framework:

"Congrats on recognising the issue and taking the right measures to fix it!"

As AI moves from pilot projects to production infrastructure, every CMO will need to speak this language. The teams who understand it now will build commercial advantages that compound over time. The question isn't whether AI tokenomics becomes standard practice. It's whether you adopt it before or after you've burned through your budget.

Want to understand where your AI budget is actually going?

AI tokenomics isn't optional anymore. It's the next commercial discipline every CMO and marketing operations leader needs to understand. At Push, we run AI operations audits that map token usage across workflows, identify cost-saving opportunities, and build optimisation frameworks, all without compromising output quality.

Get in touch to book an AI operations audit →

About the Author

Ricky Solanki is co-founder of Push, the UK's leading AI-first digital marketing agency. He coined the term "AI tokenomics" to describe the discipline of managing token-level costs in AI operations, adapting the cryptocurrency term for practical application in marketing and business contexts.

Push won Best Use of AI at the UK Agency Awards 2025 and Best Use of AI in Search at the UK Search Awards 2025. The agency specialises in AI adoption, implementation, and operations optimisation for medium-sized UK businesses.

Connect with Ricky
